Master the complexity of AI governance with the CEO Guide to ISO 42001. This executive guide breaks down risk management, data quality, and the certifiable framework required for responsible AI leadership in the agentic era.

Key Takeaways

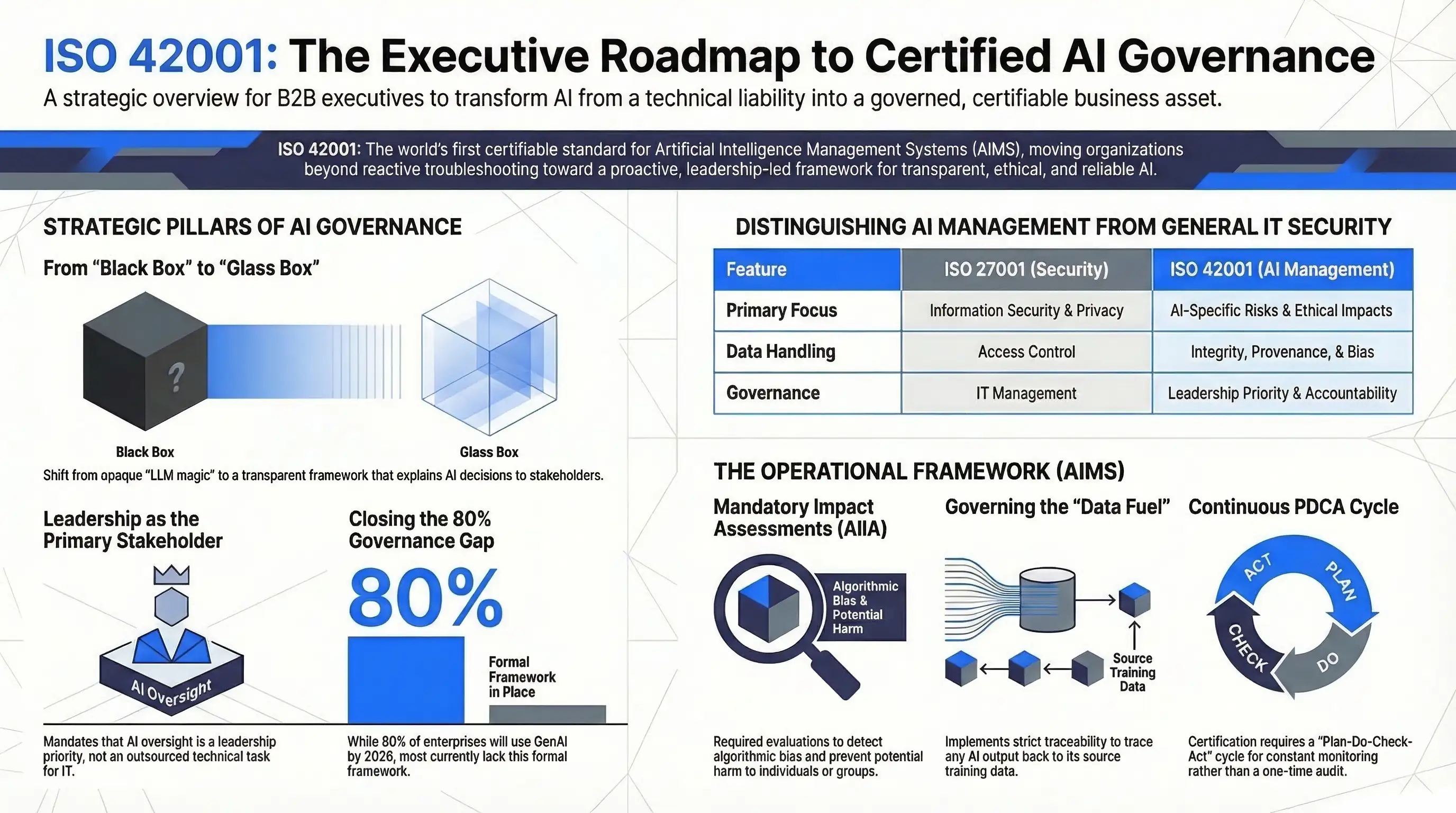

- ISO 42001 is the first global, certifiable standard dedicated to Artificial Intelligence Management Systems (AIMS).

- It shifts focus from reactive troubleshooting to proactive Risk Management across the entire AI Lifecycle.

- The standard mandates high Data Quality and Traceability, ensuring AI “fuel” is unbiased and accurate.

- Leadership is held accountable through clear Roles and Responsibilities and “meaningful human oversight”.

- Certification requires a continuous Plan-Do-Check-Act cycle rather than a one-time audit.

What is ISO 42001?

ISO 42001 (formally ISO/IEC 42001:2023) is a comprehensive international standard that provides a structured framework for managing Artificial Intelligence risks and opportunities within an organization. It enables businesses to develop and deploy AI systems responsibly, ensuring transparency, accountability, and ethical integrity.

Introduction: The Executive Mandate for AI Governance

The Age of the Agentic Frontier

As a CEO, you are no longer just overseeing software; you are overseeing “agents” that make autonomous decisions. While the promise of a Revenue Boost through AI is immense, the legal and ethical stakes have never been higher.

According to a recent Gartner study, 80% of enterprises will have used GenAI APIs or deployed GenAI-enabled applications by 2026, yet many lack a formal framework for managing them.

Why ISO 42001 is Your New North Star

The gap between AI capability and AI safety is widening. ISO 42001 closes this gap by moving beyond general IT security (ISO 27001) to address the unique “stochastic” nature of AI.

It provides you with the “Glass Box” needed to explain AI decisions to regulators, board members, and customers. As noted by Microsoft’s Chief Responsible AI Officer, “Governance isn’t a brake; it’s the steering wheel that allows you to go faster safely.”

Building a Defensible Data Moat

Imagine a world where your AI isn’t a liability but a certified asset. By adopting this standard, you implement Mandatory Artificial Intelligence Impact Assessments (AIIAs).

This ensures your proprietary data—your “fuel”—is governed with a level of Traceability that makes your AI outcomes reproducible and your market position defensible against “weak AI” upstarts.

Leading the Transition to AIMS

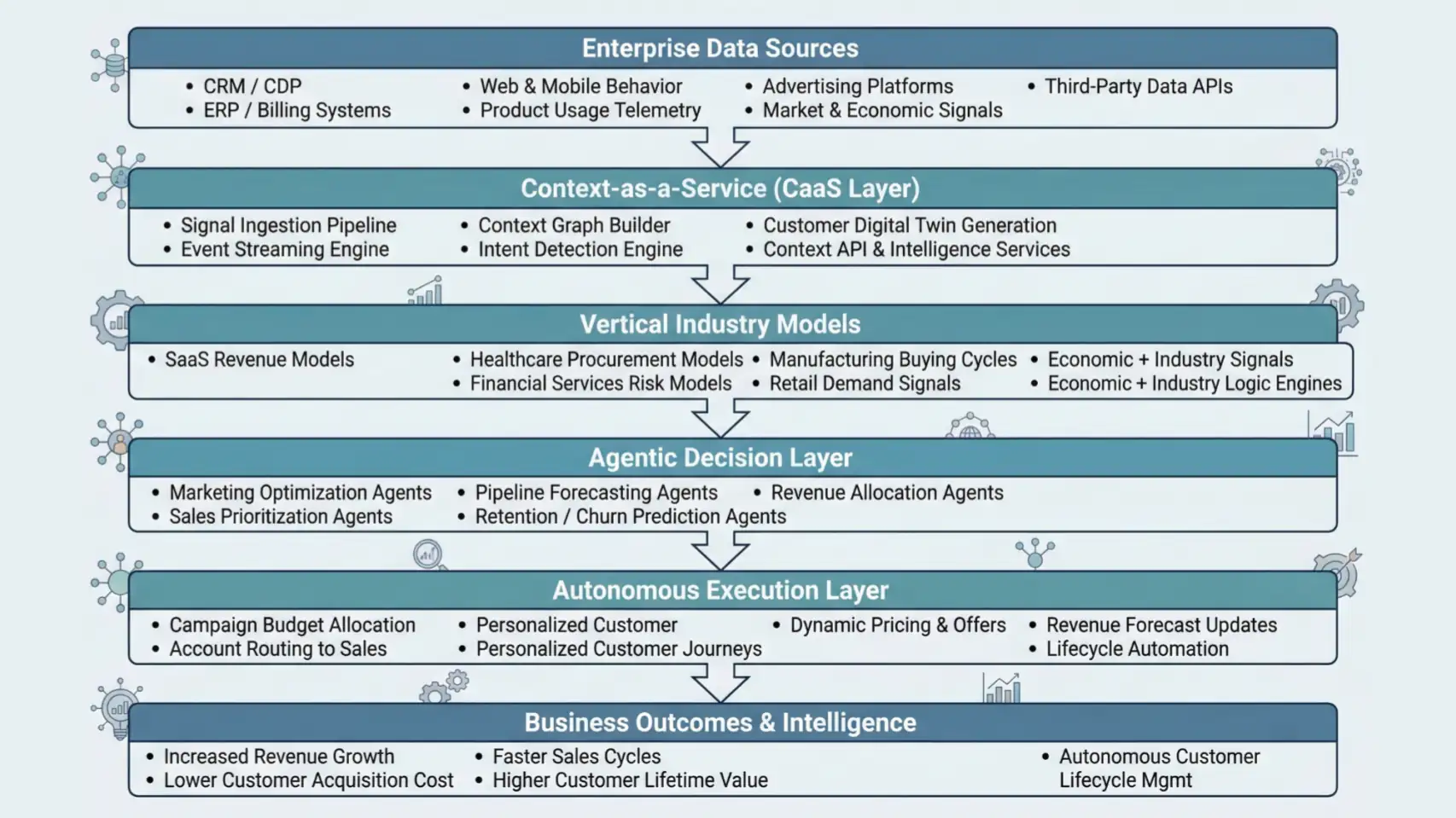

The transition from a “Chatbot” to a Native AI Vertical-Agentic Platform requires a foundational shift in leadership.

ISO 42001 mandates that AI oversight is a leadership priority, not just a technical one. By the end of this guide, you will have the roadmap to move from AI experimentation to a certifiable, high-authority Artificial Intelligence Management System.

The 5W’s of ISO 42001: The CEO’s Briefing

Who Needs to Lead the Charge?

Responsibility for ISO 42001 starts at the top. While the CTO manages the technical implementation, the CEO must define the Roles and Responsibilities for AI oversight.

The standard distinguishes between AI Providers (those developing models) and AI Users (those deploying them), requiring clear accountability for each.

What is Being Managed?

You are managing the AI Lifecycle—from the first line of code to the eventual retirement of the model.

This includes managing the integrity and provenance of data used for training and inference.

It’s not just about the code; it’s about the ethical, legal, and societal impacts of the AI’s output.

Where Does Implementation Occur?

Implementation occurs across the entire organizational fabric. It requires a Plan-Do-Check-Act cycle that integrates into your existing business processes.

From the data warehouse where “fuel” is stored to the front-end interface where “meaningful human oversight” occurs, ISO 42001 is omnipresent.

When is the Right Time to Start?

The “When” is now. With the EU AI Act and other global regulations looming, ISO 42001 serves as a “safe harbor” framework. Early adopters gain a competitive edge by proving to enterprise clients that their AI is audited, transparent, and safe.

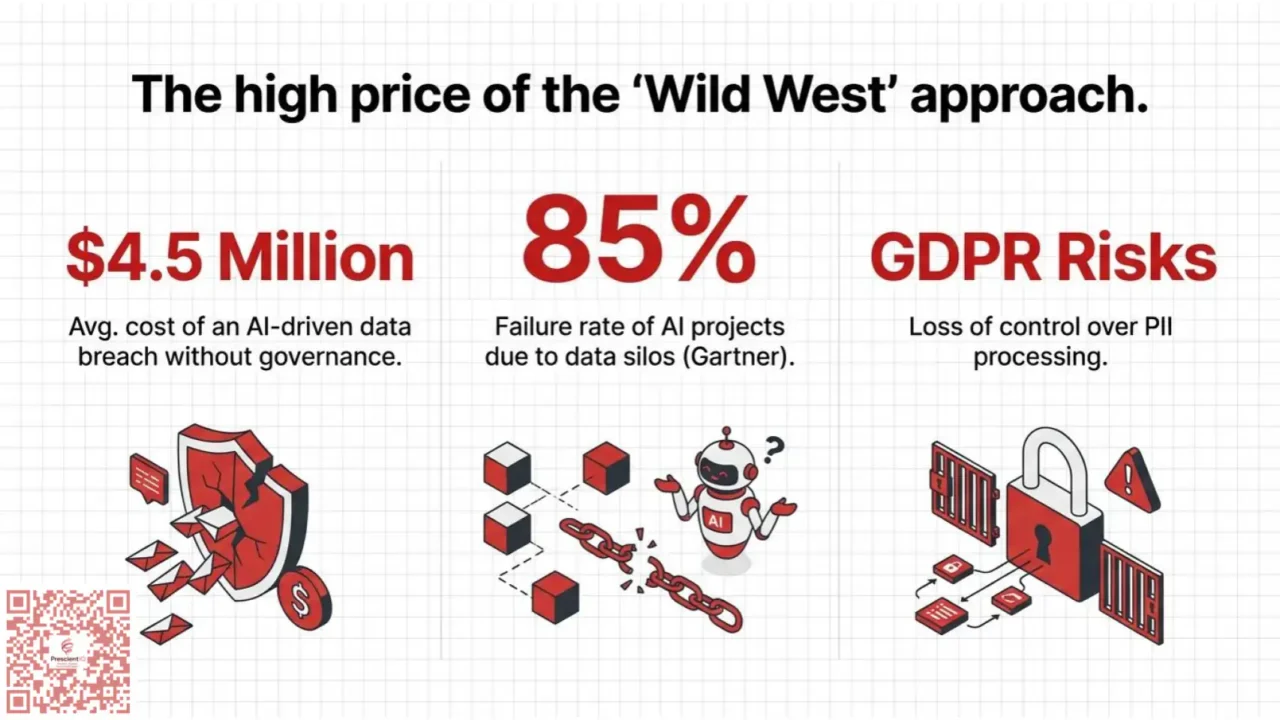

According to IBM’s 2024 Global AI Adoption Index, 40% of companies are still hesitant to deploy AI due to data privacy concerns. Certification helps remove this barrier.

Why Does This Matter for Your Bottom Line?

Beyond compliance, ISO 42001 is about Operational Alpha. It encourages the use of threat modeling tools to prevent costly failures like prompt injection or data poisoning.

By ensuring Data Quality, you reduce the OpEx costs associated with fixing biased or hallucinated AI outputs.

3 Use Cases: ISO 42001 in Action

Use Case 1: The Fintech “Lending Agent”

- A fintech company deploys an AI to approve loans. The model is a “black box,” and a sudden spike in rejected applications from a specific demographic triggers a regulatory investigation and a PR nightmare.

- By implementing ISO 42001, the company conducts Mandatory AIIAs to detect bias early. They maintain a Traceability log of the training sets. When queried, they can point to the specific data lineage that justifies the AI’s decision.

- The company avoids millions in fines and gains a reputation for “Fair AI,” leading to a 20% increase in new account sign-ups.
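
One simple way to detect the kind of bias described above is to compare approval rates across groups. The sketch below is illustrative only: the function names, the sample data, and the 0.8 threshold (borrowed from the "four-fifths" rule of thumb) are assumptions, not something ISO 42001 prescribes.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group approval rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group: no disparity to measure
    return min(rates.values()) / highest

# Hypothetical sample: (group label, approved?)
sample = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)
rates = approval_rates(sample)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold; set it per your own policy
```

A ratio well below the chosen threshold does not prove discrimination, but it is the kind of early signal an impact assessment would flag for human investigation.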

Use Case 2: The Healthcare Diagnostics Suite

- A hospital uses AI to prioritize ER patients. The AI fails to recognize a rare but critical symptom because the data “fuel” was poorly labeled, leading to a missed diagnosis.

- The hospital adopts the Data Governance controls of ISO 42001. They ensure Data Quality through strict labeling and acquisition protocols. They also mandate “meaningful human oversight,” ensuring a doctor can override the AI’s triage.

- Patient outcomes improve, and the hospital’s liability insurance premiums drop because its AI system is now a “Certified AIMS.”

Use Case 3: The SaaS “Agentic Customer Platform”

- A SaaS company’s AI agent mistakenly grants a 90% discount to thousands of users due to a prompt injection attack from a clever user.

- Using ISO 42001’s Risk Management methodology, the team utilizes threat modeling to identify vulnerabilities to prompt injection. They implement proactive mitigations throughout the AI Lifecycle.

- The platform remains secure, preserving margins and ensuring that “LLM magic” doesn’t turn into a financial liability.
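
One proactive mitigation for this scenario is a hard business rule enforced outside the model, so that no prompt can talk the agent past it. The following is a minimal sketch under stated assumptions: `DiscountRequest`, `review_discount`, and the 15% limit are hypothetical names and values, not part of ISO 42001 or any specific product.

```python
from dataclasses import dataclass

MAX_AGENT_DISCOUNT_PCT = 15.0  # assumed policy limit, not taken from the standard

@dataclass(frozen=True)
class DiscountRequest:
    customer_id: str
    percent: float

def review_discount(request: DiscountRequest) -> str:
    """Decide what happens to a discount the agent proposed.

    The check runs in ordinary code outside the model, so a
    manipulated prompt cannot change the limit.
    """
    if request.percent < 0 or request.percent > 100:
        return "reject"
    if request.percent <= MAX_AGENT_DISCOUNT_PCT:
        return "approve"
    return "escalate_to_human"
```

With this guard in place, a 90% discount produced by an injected prompt would be routed to a person instead of being applied automatically.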

How PrescientIQ Addresses ISO 42001

ISO 42001 is a process-based standard (similar to ISO 27001) that focuses on how an organization governs the entire AI lifecycle. Google handles this through three main pillars:

1. The Secure AI Framework (SAIF)

Google mapped its existing SAIF to the ISO 42001 requirements. This framework ensures that the “Agentic” workflows you build on PrescientIQ are supported by:

- Infrastructure Security: Protecting the underlying TPU/GPU clusters.

- Model Integrity: Ensuring models aren’t tampered with during training or fine-tuning.

- Data Governance: Strict “Customer Data” boundaries where your industry-specific data is never leaked into the foundation models.

2. Risk Management & “AI Harms” Analysis

ISO 42001 requires proactive risk assessments. Google integrates this into PrescientIQ via:

- Evaluation Tools: PrescientIQ provides “Model Evaluation” services to test for bias, toxicity, and fairness—directly supporting ISO 42001’s requirement for Impact Assessments.

- Safety Filters: Built-in, configurable safety attributes that prevent agents from generating harmful or non-compliant industry content.

3. Continuous Compliance via “Control Navigator”

To help you (the developer) stay compliant, Google introduced the Control Navigator for PrescientIQ.

- It provides a “Google Recommended AI Essentials” framework within the Compliance Manager.

- It automatically scans your PrescientIQ environment to detect “drift” (e.g., if an agent’s notebook is accidentally exposed to a public IP), which is a core requirement for the Surveillance Audits mandated by ISO 42001.

The Turnaround at AI11x

Lucia is the CEO of AI11x, a rising star in the Native AI space.

Challenge: AI11x was on the verge of losing its biggest Enterprise contract. The client’s legal team was terrified of “autonomous agents” having access to their ERP data.

They demanded to know: “How do we know the AI won’t go rogue?” Lucia’s engineers called it “LLM magic,” but to the client, it was a “Black Box” liability.

Solution: Lucia pivoted the entire company toward ISO 42001. She moved AI oversight from a “technical task” to a leadership priority. They implemented a Causal Inference Log (Traceability) and strict Human-in-the-loop protocols. They began performing Artificial Intelligence Impact Assessments for every new feature.

Results: Within six months, AI11x became the first in its vertical to be ISO 42001 certified. The “Black Box” became a “Glass Box.” Not only did they win back the Enterprise contract, but they also saw a 40% reduction in “hallucination-related” support tickets because their Data Quality was now governed, not just managed. Lucia didn’t just sell a product; she sold Trust.

How Does ISO 42001 Impact Your Strategy?

Direct Answer: ISO 42001 forces a shift from viewing AI as a “feature” to viewing it as a core Management System that requires constant auditing and accountability.

Is Risk Management Different in AI?

Yes, because AI risks are often non-linear and evolving. ISO 42001 requires a structured process for identifying risks throughout the AI Lifecycle. This isn’t just about technical bugs; you must assess ethical, legal, and societal impacts.

How Do We Govern the “Fuel”?

You govern the fuel through Data Integrity and Provenance. You must have controls for how data is acquired and labeled to ensure results remain unbiased.

Traceability is the key; you must be able to trace a mistake back to its source input.
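
To make this concrete, a traceability log can be as simple as a mapping from each AI output to fingerprints of the inputs behind it. This is a minimal sketch, not a prescribed design: `fingerprint`, `LineageLog`, and the example file name are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

def fingerprint(record: dict) -> str:
    """Stable SHA-256 hash of a record, independent of key order."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

@dataclass
class LineageLog:
    # prediction ID -> fingerprints of the inputs used for that prediction
    entries: dict = field(default_factory=dict)
    # fingerprint -> name of the dataset or system the input came from
    sources: dict = field(default_factory=dict)

    def record(self, prediction_id: str, inputs: list, source_name: str) -> None:
        prints = []
        for item in inputs:
            fp = fingerprint(item)
            self.sources[fp] = source_name
            prints.append(fp)
        self.entries[prediction_id] = prints

    def trace(self, prediction_id: str) -> list:
        """Return (fingerprint, source) pairs for one prediction."""
        return [(fp, self.sources[fp]) for fp in self.entries.get(prediction_id, [])]

# Hypothetical usage: log one decision, then trace it back later.
log = LineageLog()
log.record("pred-001", [{"income": 52000, "region": "north"}], "loans_2023.csv")
origin = log.trace("pred-001")
```

Hashing the inputs rather than storing them keeps the log small and avoids copying sensitive data, while still letting an auditor confirm which source record fed a given decision.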

What Tables Summarize the Standards?

| Feature | ISO 27001 (Security) | ISO 42001 (AI Management) |
| --- | --- | --- |
| Primary Focus | Information Security & Privacy | AI-Specific Risks & Opportunities |
| Data Handling | Security & Access Control | Integrity, Provenance, & Bias |
| Governance | IT Management | Leadership Priority & Ethical Impact |
| Auditing | Static Security Controls | Continuous Lifecycle Monitoring |

| Risk Category | Example Threat | ISO 42001 Mitigation |
| --- | --- | --- |
| Technical | Prompt Injection | Threat Modeling |
| Ethical | Algorithmic Bias | Mandatory AIIAs |
| Operational | Model Drift | Continuous Monitoring |

Implementing ISO 42001: A Step-by-Step Guide

- Establish Leadership Commitment: Define AI oversight as a leadership priority and assign clear Roles and Responsibilities.

- Define the Scope of the AIMS: Determine which AI systems are covered—whether you are an AI Provider or an AI User.

- Conduct an Initial AI Impact Assessment (AIIA): Evaluate potential harms to individuals, focusing on bias and loss of rights.

- Implement Data Controls: Establish protocols for data acquisition, labeling, and processing to ensure Traceability.

- Develop Human Oversight Protocols: Ensure “meaningful human oversight” is proportional to the risk of the AI task.

- Initiate the PDCA Cycle: Launch a “Plan-Do-Check-Act” cycle with regular internal and external audits.
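
The human oversight step above, where review is proportional to risk, could be sketched as a small routing rule. The tiers and confidence thresholds here are assumptions chosen for illustration; your own policy would set them.

```python
from enum import Enum

class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

# Assumed policy: minimum model confidence needed to skip human review.
# None means a human always reviews, regardless of confidence.
AUTO_APPROVE_THRESHOLD = {
    RiskTier.LOW: 0.70,
    RiskTier.MEDIUM: 0.95,
    RiskTier.HIGH: None,
}

def needs_human_review(tier: RiskTier, confidence: float) -> bool:
    """Return True when a person must sign off on the AI's decision."""
    threshold = AUTO_APPROVE_THRESHOLD[tier]
    if threshold is None:
        return True
    return confidence < threshold
```

Under these assumed values, a low-risk task at 0.90 confidence proceeds automatically, while any high-risk task goes to a reviewer no matter how confident the model is.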

Performing an ISO 42001 audit involves evaluating an organization’s Artificial Intelligence Management System (AIMS) against the structured framework of the international standard. Rather than a static, one-time check, the process is built on a continuous Plan-Do-Check-Act (PDCA) cycle.

The Audit Process Framework

An effective audit ensures that AI risks and opportunities are managed responsibly, maintaining transparency and ethical integrity.

1. Preparatory Review & Scope Definition

Before the formal audit begins, the organization must define the boundaries of the AIMS.

- Identify Roles: Determine whether the organization acts as an AI Provider (developer) or an AI User (deployer), as accountability requirements differ between the two.

- Verify Leadership Commitment: Confirm that AI oversight is treated as a leadership priority with clearly assigned Roles and Responsibilities.

2. Risk and Impact Assessment Evaluation

Auditors must examine how the organization identifies and mitigates risks throughout the entire AI Lifecycle.

- AIIA Review: Check for Mandatory Artificial Intelligence Impact Assessments (AIIAs) that evaluate potential harms to individuals, such as bias or loss of rights.

- Threat Modeling: Verify the use of tools to prevent technical failures like prompt injection or data poisoning.

3. Data Governance and Quality Audit

Because data is the “fuel” for AI, auditors scrutinize its management and origin.

- Traceability: Ensure there is a clear lineage to trace any AI mistake back to its source input.

- Data Controls: Review protocols for data acquisition, labeling, and processing to ensure results remain unbiased and accurate.

4. Operational Oversight and Monitoring

The audit evaluates the mechanisms in place to maintain “meaningful human oversight”.

- Human-in-the-loop: Confirm that oversight protocols are proportional to the risk level of the AI’s tasks.

- Continuous Monitoring: For operational systems, ensure ongoing monitoring of issues such as model drift to maintain long-term performance.
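
As one illustration of continuous monitoring, model drift can be tracked by comparing the distribution of recent inputs or scores against a baseline. The sketch below uses the Population Stability Index (PSI), a common drift metric that ISO 42001 does not itself mandate; the function names and bucket edges are assumptions.

```python
import math

def bucket_shares(values, edges):
    """Share of values in each bucket defined by ascending edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)
        counts[idx] += 1
    total = len(values)
    # A small floor avoids log(0) when a bucket is empty.
    return [max(c / total, 1e-6) for c in counts]

def population_stability_index(baseline, current, edges):
    """PSI = sum over buckets of (actual - expected) * ln(actual / expected)."""
    expected = bucket_shares(baseline, edges)
    actual = bucket_shares(current, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))

# Hypothetical model scores: an evenly spread baseline vs. a shifted recent window.
edges = [0.25, 0.5, 0.75]
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]
psi = population_stability_index(baseline, recent, edges)
```

Values near zero suggest a stable population. Cut-offs such as 0.1 and 0.25 are often cited as rules of thumb for moderate and significant drift, but the alert level should be an explicit, documented policy choice.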

Summary of Audit Focus Areas

| Audit Area | Key Requirement | Objective |
| --- | --- | --- |
| Governance | Leadership accountability | Ensure AI is not just an IT task. |
| Risk | Lifecycle-wide management | Address non-linear and evolving AI risks. |
| Data | Integrity and Provenance | Maintain high data quality and eliminate bias. |
| Oversight | Meaningful human control | Provide a “Glass Box” for explaining AI decisions. |

Next Steps for Your Organization

To prepare for a formal audit, you should conduct a gap analysis between your current AI workflows and the ISO 42001 requirements to identify your highest-risk areas.
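
A gap analysis can start as a simple checklist comparison. In this sketch the control names are hypothetical labels that mirror the audit areas in this guide; they are not the official clause or control numbering of ISO 42001.

```python
# Hypothetical checklist: control name -> audit area from this guide.
REQUIRED_CONTROLS = {
    "leadership_roles_assigned": "Governance",
    "aims_scope_defined": "Governance",
    "impact_assessment_done": "Risk",
    "threat_model_maintained": "Risk",
    "data_lineage_logged": "Data",
    "human_oversight_protocol": "Oversight",
    "drift_monitoring_active": "Oversight",
}

def gap_analysis(implemented):
    """Group the controls that are still missing by audit area."""
    gaps = {}
    for control, area in REQUIRED_CONTROLS.items():
        if control not in implemented:
            gaps.setdefault(area, []).append(control)
    return gaps

def readiness(implemented):
    """Fraction of required controls already in place."""
    covered = sum(1 for c in REQUIRED_CONTROLS if c in implemented)
    return covered / len(REQUIRED_CONTROLS)

# Hypothetical current state of one organization.
in_place = {"leadership_roles_assigned", "aims_scope_defined", "data_lineage_logged"}
gaps = gap_analysis(in_place)
score = readiness(in_place)
```

The areas with the most missing controls are natural candidates for the highest-risk items to address first.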

Conclusion: Your Next Steps Toward AI Authority

ISO 42001 is the bridge between the “Wild West” of AI and the future of Responsible Enterprise. By treating AI as a Management System rather than a software tool, you build a foundation of Trust that competitors cannot replicate through “speed” alone.

Key Learning Points:

- Risk is Lifecycle-wide: From design to retirement.

- Data is the Foundation: Quality and lineage are non-negotiable.

- Governance is Leadership: Accountability cannot be outsourced to the IT department.

Next Step: Conduct a gap analysis between your current AI workflows and the ISO 42001 requirements to identify your highest-risk areas.

People Also Ask (FAQ)

What is the main goal of ISO 42001?

The main goal is to provide a certifiable framework for managing AI responsibly, focusing on risk management, data quality, and accountability throughout the AI lifecycle.

Is ISO 42001 mandatory for all companies?

While not legally mandatory like the EU AI Act, it is the international gold standard. Many enterprise clients now require it to pass procurement and safety audits.

How does ISO 42001 differ from ISO 27001?

ISO 27001 focuses on general information security. ISO 42001 goes deeper into AI-specific issues like algorithmic bias, data provenance, and ethical impacts.

What is an Artificial Intelligence Impact Assessment (AIIA)?

An AIIA is a mandatory assessment under ISO 42001 that evaluates the potential harm an AI system may cause to individuals or groups.

Who is responsible for AI oversight in ISO 42001?

Leadership is responsible. The standard requires the definition of clear owners for AI oversight, distinguishing between developers (Providers) and deployers (Users).

References

- International Organization for Standardization (ISO/IEC 42001:2023).

- Gartner: Top Strategic Technology Trends for 2024-2026.

- IBM: Global AI Adoption Index Report.

- MatrixLabX: State of AI in the Enterprise.